As usual it is easier to explain “by example”:

Consider a data mart. The business has measures per week and per month, but not on a daily basis. Should we have 2 fact tables, one for weekly and one for monthly, like this: (1)

Or should we create a mixed grain fact table like this: (2)

In the above fact table, the black lines are weekly figures, whereas the red lines are monthly figures. Both are stored in the same fact table, but in different measure columns. On the rows where the weekly measure exists, the monthly measure is zero, and vice versa. Therefore the weekly and monthly totals are correct:

select D.Week, sum(F.WeeklyMeasure) from FactMixedGrain F

join DimDate D on F.DimDate = D.DimDate group by D.Week

select D.Month, sum(F.MonthlyMeasure) from FactMixedGrain F

join DimDate D on F.DimDate = D.DimDate group by D.Month
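To make the zero-padding idea concrete, here is a minimal sketch that runs the two queries against an in-memory SQLite database. The table and column names come from the queries above; the column types, the dates, the week and month labels, and the measure values are all made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE DimDate (DimDate INTEGER PRIMARY KEY, Week TEXT, Month TEXT);
CREATE TABLE FactMixedGrain (DimDate INTEGER, WeeklyMeasure INTEGER, MonthlyMeasure INTEGER);

-- Hypothetical dates: two week-ending dates and one month-ending date.
INSERT INTO DimDate VALUES (20140105, '2014-W01', '2014-01');
INSERT INTO DimDate VALUES (20140112, '2014-W02', '2014-01');
INSERT INTO DimDate VALUES (20140131, '2014-W05', '2014-01');

-- Weekly rows carry 0 in MonthlyMeasure; the monthly row carries 0 in WeeklyMeasure.
INSERT INTO FactMixedGrain VALUES (20140105, 10, 0);
INSERT INTO FactMixedGrain VALUES (20140112, 20, 0);
INSERT INTO FactMixedGrain VALUES (20140131, 0, 100);
""")

# Weekly totals: the monthly row contributes 0 to the weekly measure.
weekly = conn.execute("""
    SELECT D.Week, SUM(F.WeeklyMeasure)
    FROM FactMixedGrain F
    JOIN DimDate D ON F.DimDate = D.DimDate
    GROUP BY D.Week
    ORDER BY D.Week
""").fetchall()

# Monthly totals: the weekly rows contribute 0 to the monthly measure.
monthly = conn.execute("""
    SELECT D.Month, SUM(F.MonthlyMeasure)
    FROM FactMixedGrain F
    JOIN DimDate D ON F.DimDate = D.DimDate
    GROUP BY D.Month
    ORDER BY D.Month
""").fetchall()

print(weekly)
print(monthly)
```

With this sample data the totals for the real weeks and for the month come out right. Note, though, that the month-end date also shows up in the weekly result as a week with a total of 0, because the monthly row still joins to a week in the date dimension.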

Usually the main reason for choosing option (2) is to have both the weekly and monthly measures in 1 fact table. This is done to save development effort, particularly ETL effort. It is easier to populate just 1 table than two tables.

That’s the advantage. Now the disadvantages. The issue with option (2) is that the grain of the fact table is mixed. There are 2 grains. In practice we have other dimension key columns in the fact table. And we also have other measure columns in the fact table. Some of these measures are weekly and some of them are monthly. Perhaps some of these measures are daily.

The issues with a fact table with a mixed grain like that are:

- When reporting from the table, we risk mixing measures with different grains in the same formula, producing a meaningless result.

- The DateKey is used to indicate both the end of week and the end of month. This requires certain logic in the ETL, which prolongs its development.

- In the fact table we have black and red lines, indicating weekly and monthly grain. Black for weekly and red for monthly. We may end up having yellow lines too, for daily. And blue lines too, for quarterly. Oh, and green lines too, for yearly. After that, it will be very confusing. When we query the fact table, we wouldn’t be able to tell which line is which.

- When we join this fact table with another fact table (drilling across), we risk joining the wrong rows.
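The first risk in the list above is easy to reproduce. The sketch below uses the same kind of made-up sample rows (two weekly rows and one monthly row, all values hypothetical) and shows two ways a report can go wrong: averaging a weekly measure over rows that include the zero-padded monthly row, and adding the two measures together in one formula.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE FactMixedGrain (DimDate INTEGER, WeeklyMeasure INTEGER, MonthlyMeasure INTEGER);
INSERT INTO FactMixedGrain VALUES (20140105, 10, 0);   -- weekly row
INSERT INTO FactMixedGrain VALUES (20140112, 20, 0);   -- weekly row
INSERT INTO FactMixedGrain VALUES (20140131, 0, 100);  -- monthly row
""")

# Naive average: the monthly row's padded 0 is counted as if it were a week.
naive_avg = conn.execute(
    "SELECT AVG(WeeklyMeasure) FROM FactMixedGrain"
).fetchone()[0]

# Average over the weekly rows only. The filter is a guess at the grain:
# nothing in the table states which rows are weekly, so we rely on the padding.
filtered_avg = conn.execute(
    "SELECT AVG(WeeklyMeasure) FROM FactMixedGrain WHERE MonthlyMeasure = 0"
).fetchone()[0]

# Mixing both grains in one formula adds weekly figures to a monthly figure.
mixed_total = conn.execute(
    "SELECT SUM(WeeklyMeasure + MonthlyMeasure) FROM FactMixedGrain"
).fetchone()[0]

print(naive_avg, filtered_avg, mixed_total)
```

The naive average is dragged down by the padded row, and the mixed total has no business meaning. The filtered query gives the intended figure here, but only because of an assumption about how the rows were padded, which is exactly the kind of hidden logic that makes this design risky.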

In option (2) above, the date dimension is used for 2 different grains in the fact table. Other examples like this are:

- Product dimension used at the product group level and at the product category level.

- Customer dimension used at the company level and at the group level.

When we have 2 or 3 dimensions, each with a dual grain like that, it becomes really confusing and risky.

In the above example, the same dimension key column has a dual grain. In other cases, the mixed grain in the fact table is caused by different dimension key columns. One measure corresponds to dim1, whereas another measure corresponds to dim2. Where measure1 has a value, measure2 is 0, and vice versa, like this:

And so it becomes really confusing. I would therefore recommend avoiding this design of mixing measures with different grains in the same fact table. Instead, it is better to put them into separate fact tables.
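As a rough sketch of the recommended alternative, the same sample figures can be split into one fact table per grain. The table names FactWeekly and FactMonthly and all the values are hypothetical, chosen only for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- One fact table per grain, so every row in a table means the same thing.
CREATE TABLE FactWeekly (DimDate INTEGER, WeeklyMeasure INTEGER);
CREATE TABLE FactMonthly (DimDate INTEGER, MonthlyMeasure INTEGER);

INSERT INTO FactWeekly VALUES (20140105, 10);
INSERT INTO FactWeekly VALUES (20140112, 20);
INSERT INTO FactMonthly VALUES (20140131, 100);
""")

# No padding rows and no grain filter are needed: every row is a week.
weekly_avg = conn.execute(
    "SELECT AVG(WeeklyMeasure) FROM FactWeekly"
).fetchone()[0]
weekly_rows = conn.execute(
    "SELECT COUNT(*) FROM FactWeekly"
).fetchone()[0]

# Likewise, every row in the monthly table is a month.
monthly_total = conn.execute(
    "SELECT SUM(MonthlyMeasure) FROM FactMonthly"
).fetchone()[0]

print(weekly_avg, weekly_rows, monthly_total)
```

Because each table holds a single grain, aggregates such as averages and row counts behave as expected without any assumption about which rows belong to which grain.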

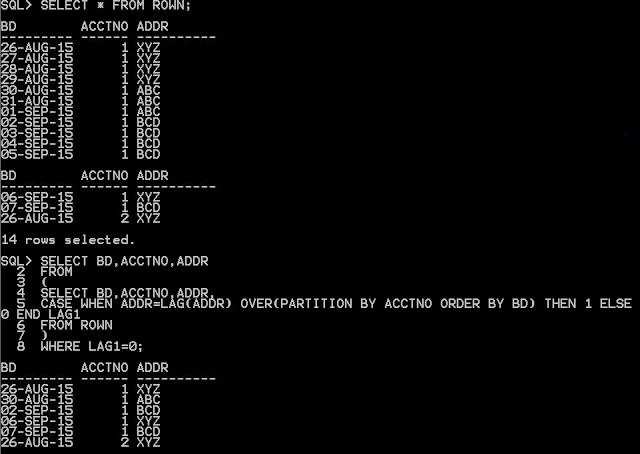

INS INTO KBB VALUES('2014-01-01','A');

INS INTO KBB VALUES('2014-01-02','A');

INS INTO KBB VALUES('2014-01-03','D');

INS INTO KBB VALUES('2014-01-04','A');

INS INTO KBB VALUES('2014-01-05','D');

INS INTO KBB VALUES('2014-01-06','A');

FROM KBB